Job Features¶

Special features can be installed or configured on the fly on allocated nodes. Features are requested in a Slurm job using a specially formatted comment.

$ salloc ... --comment "use:feature=req"

or

#SBATCH --comment "use:feature=req"

or for multiple features

$ salloc ... --comment "use:feature1=req1 use:feature2=req2 ..."

where feature is a feature name and req is a requested value (true, version string, etc.)
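As a minimal sketch of the multi-feature syntax above, the comment string can be assembled from `feature=req` pairs with a small shell helper. The function name `build_comment` is hypothetical and not part of Slurm or the cluster tooling; it only joins its arguments in the `use:feature=req` form described here.

```shell
# Hypothetical helper: join feature=req pairs into a "use:..." comment string.
build_comment() {
  comment=""
  for pair in "$@"; do
    # Prefix each pair with "use:" and separate entries with a single space.
    comment="${comment:+$comment }use:$pair"
  done
  printf '%s\n' "$comment"
}

build_comment xorg=True mon-flops=off
# prints: use:xorg=True use:mon-flops=off

# Possible use on the command line (not executed here):
#   salloc ... --comment "$(build_comment xorg=True mon-flops=off)"
```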

Xorg¶

Xorg is a free and open source implementation of the X Window System display server maintained by the X.Org Foundation. Xorg is available only on Karolina accelerated nodes Acn[01-72].

$ salloc ... --comment "use:xorg=True"

VTune Support¶

Load the VTune kernel modules.

$ salloc ... --comment "use:vtune=version_string"

version_string is the VTune version, e.g. 2019_update4

Global RAM Disk¶

Warning

The feature has not been implemented on Slurm yet.

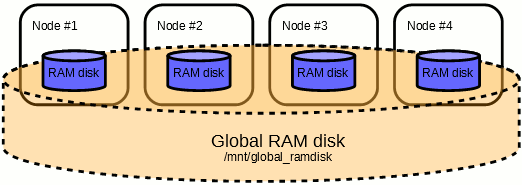

The Global RAM disk deploys a BeeGFS On Demand parallel filesystem, using the local RAM disks of the allocated nodes as a storage backend.

The Global RAM disk is mounted at /mnt/global_ramdisk.

$ salloc ... --comment "use:global_ramdisk=true"

Example¶

$ sbatch -A PROJECT-ID -p qcpu --nodes 4 --comment="use:global_ramdisk=true" ./jobscript

This command submits a 4-node job in the qcpu queue. Once the job is running, a RAM disk shared across the 4 nodes is created. The RAM disk is accessible at /mnt/global_ramdisk, and files written to it are visible on all 4 nodes.

The file system is private to a job and shared among its nodes. It is created when the job starts and deleted when the job ends.

Warning

The Global RAM disk will be deleted immediately after the calculation ends. Users should take care to save the output data from within the jobscript.
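One way to follow this advice is to copy results to persistent storage as the last step of the jobscript. The sketch below assumes a hypothetical helper named `stage_out` and a hypothetical `results` directory; only the mount point /mnt/global_ramdisk comes from this page, and `SLURM_SUBMIT_DIR` is the standard Slurm variable holding the submission directory.

```shell
# Hypothetical helper: copy a directory from the RAM disk to persistent storage.
stage_out() {
  src="$1"    # directory on the Global RAM disk
  dest="$2"   # directory on a persistent file system
  # Create the destination and copy the contents, including hidden files.
  mkdir -p "$dest" && cp -r "$src"/. "$dest"/
}

# Possible last step of a jobscript (not executed here):
#   stage_out /mnt/global_ramdisk/results "$SLURM_SUBMIT_DIR/results"
```

Calling the helper before the jobscript exits matters because the RAM disk contents are gone once the job ends.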

The files on the Global RAM disk will be striped equally across all the nodes, using a 512k stripe size. Check the Global RAM disk status:

$ beegfs-df -p /mnt/global_ramdisk

$ beegfs-ctl --mount=/mnt/global_ramdisk --getentryinfo /mnt/global_ramdisk

Use the Global RAM disk when you need a very large RAM disk space. The Global RAM disk allows for high-performance sharing of data among compute nodes within a job.

Warning

Use of the Global RAM disk file system comes at the expense of operational memory.

| Global RAM disk | |
|---|---|
| Mountpoint | /mnt/global_ramdisk |
| Accesspoint | /mnt/global_ramdisk |
| Capacity | Barbora: (N x 180) GB |
| User quota | none |

N = number of compute nodes in the job.
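The capacity rule can be checked with simple shell arithmetic. This is only a sketch: the function name `ramdisk_capacity_gb` is hypothetical, and the 180 GB per node figure is the Barbora value listed on this page.

```shell
# Hypothetical helper: Global RAM disk capacity in GB for N Barbora nodes (N x 180).
ramdisk_capacity_gb() {
  echo $(( $1 * 180 ))
}

ramdisk_capacity_gb 4
# prints: 720
```

Because the RAM disk uses the nodes' own memory, this figure is an upper bound shared with the memory the job itself needs.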

Warning

Available on Barbora nodes only.

MSR-SAFE Support¶

Load a kernel module that allows saving/restoring values of MSR registers. Uses LLNL MSR-SAFE.

$ salloc ... --comment "use:msr=version_string"

version_string is the MSR-SAFE version, e.g. 1.4.0

Danger

Hazardous: it causes CPU frequency disruption.

Warning

Available on Barbora nodes only.

Warning

It is recommended to combine this feature with the mon-flops=off feature.

Cluster Monitoring¶

Disable monitoring of certain registers that are used to collect performance monitoring counter (PMC) values, such as CPU FLOPs or memory bandwidth:

$ salloc ... --comment "use:mon-flops=off"

Warning

Available on Karolina nodes only.

HDEEM Support¶

Load the HDEEM software stack. The High Definition Energy Efficiency Monitoring (HDEEM) library is a software interface used to measure power consumption of HPC clusters with bullx blades.

$ salloc ... --comment "use:hdeem=version_string"

version_string is the HDEEM version, e.g. 2.2.8-1

Warning

Available on Barbora nodes only.

NVMe Over Fabrics File System¶

Warning

The feature has not been implemented on Slurm yet.

Attach a volume from NVMe storage and mount it as a file system. The file system is mounted at /mnt/nvmeof (on the first node of the job). The Barbora cluster provides two NVMeoF storage nodes equipped with NVMe disks. Each storage node contains seven 1.6TB NVMe disks and provides a net aggregated capacity of 10.18TiB. Storage space is provided using the NVMe over Fabrics protocol; the RDMA network, i.e. InfiniBand, is used for data transfers.

$ salloc ... --comment "use:nvmeof=size"

size is the size of the requested volume; the usual size suffixes are used, e.g. 10t

Create a shared file system on the attached NVMe file system and make it available on all nodes of the job by appending :shared to the size specification. The shared file system is mounted at /mnt/nvmeof-shared.

$ salloc ... --comment "use:nvmeof=size:shared"

For example:

$ salloc ... --comment "use:nvmeof=10t:shared"

Warning

Available on Barbora nodes only.

Smart Burst Buffer¶

Warning

The feature has not been implemented on Slurm yet.

Accelerate SCRATCH storage using the Smart Burst Buffer (SBB) technology. A specific Burst Buffer process is launched and Burst Buffer resources (CPUs, memory, flash storage) are allocated on an SBB storage node for acceleration (I/O caching) of SCRATCH data operations. The SBB profile file /lscratch/$SLURM_JOB_ID/sbb.sh is created on the first allocated node of the job. For SCRATCH acceleration, the SBB profile file has to be sourced into the shell environment, because the environment variables it provides have to be defined in the process environment. Modified data is written asynchronously to the backend (Lustre) filesystem, so writes might proceed after job termination.

The Barbora cluster provides two SBB storage nodes equipped with NVMe disks. Each storage node contains ten 3.2TB NVMe disks and provides a net aggregated capacity of 29.1TiB. Acceleration uses the RDMA network, i.e. InfiniBand, for data transfers.
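The capacity figure appears consistent with a plain decimal-to-binary unit conversion of the raw disk space (ten disks of 3.2 TB each, expressed in TiB). The sketch below assumes that interpretation; the helper name `tb_to_tib` is hypothetical and only performs the unit conversion.

```shell
# Hypothetical helper: convert COUNT disks of SIZE_TB terabytes each to TiB.
tb_to_tib() {
  # 1 TB = 10^12 bytes, 1 TiB = 2^40 bytes.
  awk -v n="$1" -v tb="$2" 'BEGIN { printf "%.1f\n", n * tb * 1e12 / 2^40 }'
}

tb_to_tib 10 3.2
# prints: 29.1
```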

$ salloc ... --comment "use:sbb=spec"

spec specifies the amount of resources requested for the Burst Buffer (CPUs, memory, flash storage); available values are small, medium, and large

Loading SBB profile:

$ source /lscratch/$SLURM_JOB_ID/sbb.sh
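Since the profile file exists only on the first allocated node, a jobscript may want to check for it before sourcing. The following is a minimal sketch under that assumption; the function name `load_sbb_profile` is hypothetical, and only the profile path comes from this page.

```shell
# Hypothetical helper: source the SBB profile only if it is readable.
load_sbb_profile() {
  profile="$1"
  if [ -r "$profile" ]; then
    # Source the file so its variables are defined in the current shell.
    . "$profile"
  else
    echo "SBB profile not found: $profile" >&2
    return 1
  fi
}

# Possible use in a jobscript (not executed here):
#   load_sbb_profile "/lscratch/$SLURM_JOB_ID/sbb.sh"
```

Sourcing, rather than executing, the file is what makes its variables visible to processes started later from the same shell.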

Warning

Available on Barbora nodes only.